As DevOps and cloud computing continue to evolve, sustainability is becoming a core concern for teams striving to reduce waste and improve efficiency. During LikeMinds Consulting’s latest live webinar, we took a deep dive into how small, sustainable changes in cloud infrastructure can improve the performance and reduce the environmental impact of your DevOps workflows.

The session, packed with insights and practical advice, showcased how technology can drive both operational efficiency and environmental sustainability. In this post, we recap the key points, takeaways, and actionable steps shared by our speakers, so you can start applying these lessons right away.

Meet the Experts: Who’s Driving the Change?

Before diving into the core content of the webinar, let’s take a moment to introduce the talented speakers who shared their expertise and unique insights on sustainable DevOps:

Ganesh Srinivasan: A Kubernetes-certified expert, Ganesh has years of experience working with multiple cloud platforms and is passionate about integrating sustainable practices into the world of cloud computing. His background in environmental engineering offers a unique perspective on how DevOps can help mitigate the environmental impact of modern technologies.

Roopika Ganesh: As an IAM (Identity and Access Management) Automation Engineer, Roopika has more than 6 years of experience in the world of DevOps and AI. Her focus is on building scalable, secure, and sustainable identity management solutions, and in this session, she demonstrated how we can leverage Kube-Green to make IAM systems more energy-efficient.

Tuan Ousman: A DevOps Engineer and certified Kubernetes Administrator, Tuan is skilled at helping enterprises containerize applications and migrate to Kubernetes environments securely. In the webinar, he illustrated how optimized resource usage in Kubernetes directly translates to real-world carbon savings.

The Hidden Environmental Impact of the Cloud: What We Don’t See

Ganesh opened the session with a bold statement: while cloud infrastructure is often praised for its efficiency, its environmental costs are rarely acknowledged. When we think of cloud environments, we think of them as invisible. You click a button to deploy a service, and everything works seamlessly. But behind the scenes, cloud data centers are consuming massive amounts of electricity.

The Carbon Footprint of Cloud Computing

Ganesh pointed out that cloud data centers, the very backbone of the cloud, use staggering amounts of energy. A single data center can use as much electricity as 50,000 homes, and collectively, the cloud industry now emits more CO₂ than the airline industry. While cloud computing is undeniably more energy-efficient than traditional on-premise infrastructure, it still contributes significantly to carbon emissions.

This phenomenon is often overlooked because cloud infrastructure feels intangible. When deploying cloud resources, we don’t see the physical infrastructure we’re relying on—there are no tangible servers, no racks of hardware we need to maintain. The result is that the environmental cost of cloud computing is hidden from the typical cloud user.

The Long-Term Risk of Ignoring Sustainability

Ganesh warned that the real risk of neglecting sustainability in DevOps is scaling up cloud systems without considering energy use. As the cloud industry grows globally, so does the demand for energy—much of which still comes from fossil fuels. While renewable energy sources like solar and wind are on the rise, we are still dealing with old infrastructure that relies on fossil fuels, and the environmental costs are stacking up.

Ganesh’s suggestion for DevOps teams? Start treating sustainability as an integral part of cloud planning. This includes being mindful of environmental costs when designing infrastructure and starting to implement changes that reduce energy waste.

Optimizing IAM Environments: The Power of Kube-Green

After Ganesh set the stage with the environmental impact of cloud infrastructure, Roopika Ganesh took over to dive into how IAM (Identity and Access Management) environments can be optimized. IAM systems are the backbone of cloud security, and they’re often running 24/7—whether or not anyone is actively using them.

Roopika explained that non-production environments such as development, QA, and staging are often provisioned at full production scale, even though they see far less use. During off-hours (nights, weekends, and holidays) these systems keep running and consume resources nobody needs.

Here’s where Kube-Green comes into play. It allows organizations to scale down non-production environments during idle times without compromising the security or availability of critical services. Roopika walked us through a live demo showing how easy it is to implement Kube-Green for non-production IAM environments.

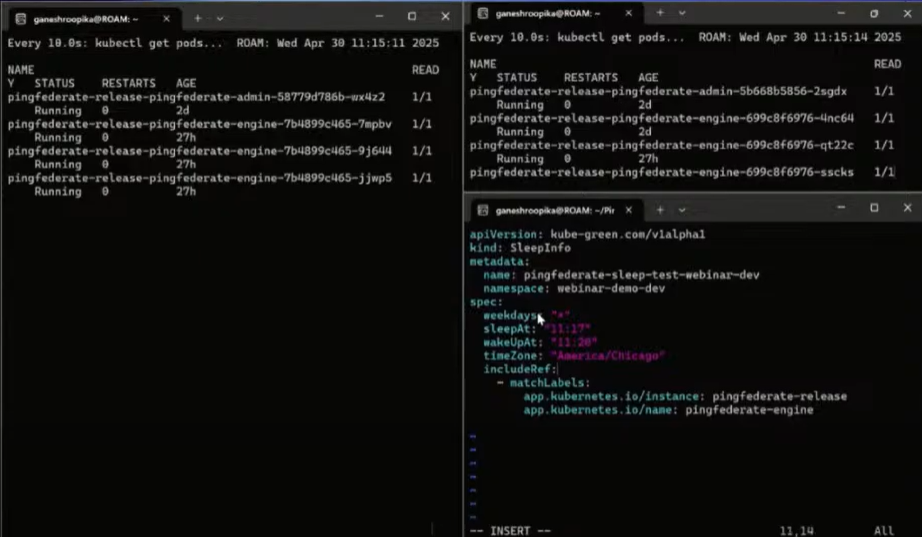

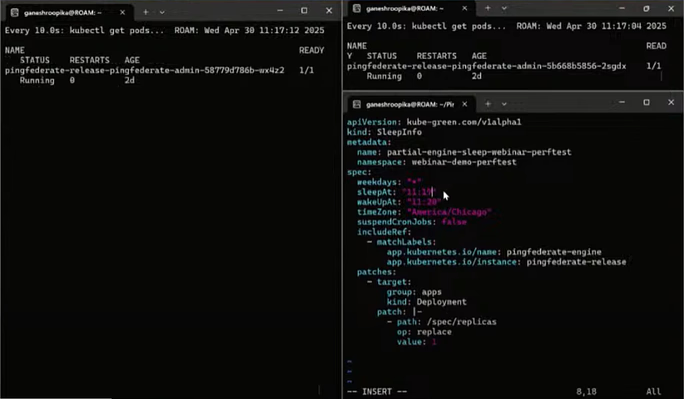

Real-Life Demo: Kube-Green in Action

During the demo, Roopika showed us two IAM environments—performance and dev. For the performance environment, she implemented a partial scale-down approach, reducing the number of pods during off-hours while still ensuring critical services were up and running. For the dev environment, she took a more aggressive approach, scaling the pods down completely during nights and weekends when the environment was idle.

She highlighted that no code changes were required—just simple YAML configuration to specify off-hours and scale-down times. The best part? No disruption to the developers working on the system.
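To give a feel for how little configuration is involved, here is a minimal sketch that builds two Kube-Green `SleepInfo` resources and prints one as JSON, which `kubectl apply -f` accepts just like YAML. The field names (`weekdays`, `sleepAt`, `wakeUpAt`, `timeZone`, `excludeRef`) follow the Kube-Green documentation as we understand it, so check them against the version you install. The namespaces, resource names, schedule, and the excluded `iam-login` deployment are hypothetical placeholders, not the values used in the demo.

```python
import json


def sleep_info_manifest(name, namespace, weekdays, sleep_at, wake_up_at,
                        time_zone="UTC", exclude_deployments=()):
    """Build a kube-green SleepInfo manifest as a plain dict."""
    spec = {
        "weekdays": weekdays,    # cron-style day range, "1-5" = Monday to Friday
        "sleepAt": sleep_at,     # time of day to scale workloads down
        "wakeUpAt": wake_up_at,  # time of day to restore them
        "timeZone": time_zone,
    }
    if exclude_deployments:
        # Deployments listed here keep running while the rest of the namespace sleeps.
        spec["excludeRef"] = [
            {"apiVersion": "apps/v1", "kind": "Deployment", "name": deployment}
            for deployment in exclude_deployments
        ]
    return {
        "apiVersion": "kube-green.com/v1alpha1",
        "kind": "SleepInfo",
        "metadata": {"name": name, "namespace": namespace},
        "spec": spec,
    }


# Hypothetical dev environment: everything sleeps on nights and weekends.
dev = sleep_info_manifest("dev-off-hours", "iam-dev", "1-5", "20:00", "08:00")

# Hypothetical performance environment: a critical service stays up (assumed name).
perf = sleep_info_manifest("perf-off-hours", "iam-perf", "1-5", "20:00", "08:00",
                           exclude_deployments=["iam-login"])

print(json.dumps(dev, indent=2))
```

Excluding specific deployments is one way to approximate a partial scale-down; the demo may have achieved it differently.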

Turning Cloud Resource Savings into Real-World Carbon Impact

Next, Tuan Ousman took the stage to explore how we can convert the saved cloud resources into real-world carbon impact. He explained that when we scale down unused pods and resources, we aren’t just saving CPU or memory; we are reducing energy consumption in the underlying cloud infrastructure.
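A quick way to see how much a schedule frees up is to count the hours a namespace spends asleep each week. The sketch below assumes a hypothetical schedule (awake 08:00 to 20:00 on five workdays, asleep otherwise) and a hypothetical pod count; neither number comes from the webinar.

```python
HOURS_PER_WEEK = 7 * 24  # 168


def weekly_sleep_hours(sleep_hour, wake_hour, workdays=5):
    """Hours per week a namespace is scaled down when it only runs
    between wake_hour and sleep_hour on `workdays` days."""
    awake_per_day = sleep_hour - wake_hour
    return HOURS_PER_WEEK - workdays * awake_per_day


def pod_hours_saved_per_week(pods_scaled_down, sleep_hour=20, wake_hour=8):
    """Pod-hours avoided per week for a given number of pods that sleep."""
    return pods_scaled_down * weekly_sleep_hours(sleep_hour, wake_hour)


hours = weekly_sleep_hours(20, 8)
print(f"{hours} of {HOURS_PER_WEEK} hours asleep ({hours / HOURS_PER_WEEK:.0%})")
print(f"10 pods -> {pod_hours_saved_per_week(10)} pod-hours saved per week")
```

Under these assumptions a namespace is idle for well over half the week, which is why off-hours scaling adds up so quickly.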

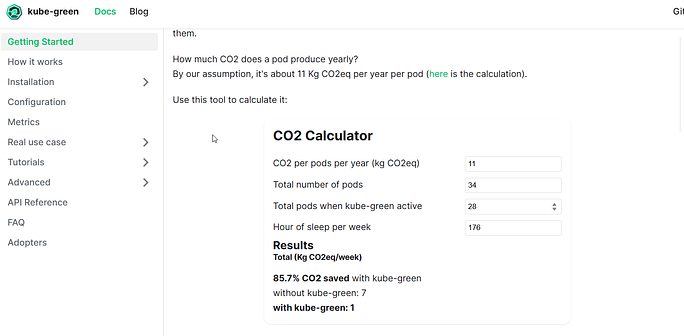

Tuan introduced the CO₂ calculator, a tool that helps organizations estimate how much carbon they’re saving by optimizing their cloud usage. He took the saved resource data from Roopika’s demo and showed us how it translates into measurable CO₂ emissions.

For example, by reducing just a few pod-hours per day, we could avoid over 200 pounds of CO₂ emissions per year. When scaled up across environments and months, this small change becomes a significant reduction in carbon output.
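The conversion behind an estimate like this can be sketched as pod-hours, times average power per pod, times data center overhead (PUE), times the carbon intensity of the grid. The code below is an illustration of that chain, not the calculator Tuan used, and every input value is a hypothetical placeholder that you should replace with figures for your own workloads and region. It is not meant to reproduce the 200-pound figure.

```python
KG_PER_POUND = 0.45359237


def co2_avoided_kg(pod_hours, watts_per_pod, pue, grid_kg_per_kwh):
    """Estimate CO2 avoided (kg) from pod-hours that were not run.

    pod_hours:        pod-hours saved over the period
    watts_per_pod:    assumed average power draw attributable to one pod
    pue:              power usage effectiveness of the data center (>= 1.0)
    grid_kg_per_kwh:  assumed grid carbon intensity in kg CO2 per kWh
    """
    energy_kwh = pod_hours * watts_per_pod / 1000 * pue
    return energy_kwh * grid_kg_per_kwh


# All four inputs below are illustrative assumptions, not webinar data.
kg = co2_avoided_kg(pod_hours=50_000, watts_per_pod=5.0, pue=1.2, grid_kg_per_kwh=0.4)
pounds = kg / KG_PER_POUND

print(f"Estimated CO2 avoided: {kg:.1f} kg ({pounds:.0f} lb)")
```

Because the result scales linearly with each input, the estimate is only as good as the power and grid-intensity figures you feed it.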

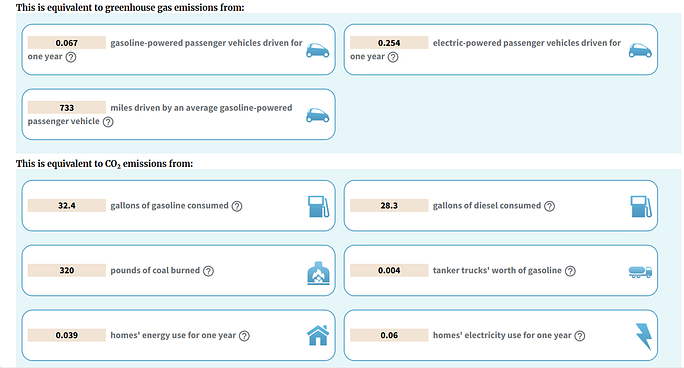

Tuan also used the EPA Greenhouse Gas Equivalencies Calculator to put these savings into perspective. For instance, the saved CO₂ emissions could be compared to the energy needed to charge over 15,000 smartphones.

Here is the link to the full webinar recording: Watch the complete session to learn how to optimize Cloud DevOps practices for greater efficiency, intelligence, and environmental sustainability.

The Power of Small Actions

The key takeaway here was that small, incremental changes—like scaling down pods during off-hours—can have a big impact when measured over time. Tuan’s data-driven approach made it clear that every pod-hour saved translates directly to measurable carbon savings.

Key Takeaways: Making Sustainability Part of Your DevOps Culture

From this webinar, it’s clear that sustainable DevOps isn’t something that needs to be implemented in the distant future. We can start making changes today, and they don’t need to be complex.

Here are some key takeaways:

Cloud Sustainability Starts with Awareness: The first step is understanding the hidden environmental impact of our cloud infrastructure.

Optimization Doesn’t Have to Be Hard: Start small—optimize non-production environments by scaling them down during idle times. It’s easy to implement and requires no code changes.

Real-World Impact: Optimizing resources in your cloud environment isn’t just about saving money; it’s about making a real, measurable impact on the environment. Tools like Kube-Green help you reduce your carbon footprint, and a CO₂ calculator lets you track the savings in tangible terms.

Conclusion: Sustainable DevOps Starts Today

The webinar made one thing crystal clear: Sustainable DevOps is something we can begin implementing today. From optimizing IAM environments to calculating the real-world carbon savings of resource reductions, the tools and strategies shared in this session offer actionable insights that can help every DevOps team work more efficiently and sustainably.

If you missed the live session, don’t worry. There’s no time like the present to start considering how greener cloud practices can enhance your own workflows. By starting small, treating sustainability as part of your core cloud strategy, and measuring the results, you’ll not only improve your operational efficiency but also contribute to a healthier planet.

With that, let’s keep the conversation going. Start with simple steps, like auditing your non-prod environments, and soon you’ll see just how impactful small changes can be. Let’s work together to make DevOps both smarter and greener.